CASE STUDY

AI or Human Text — Which Performs Better in SERPs?

AI or human text: which one performs better in SERPs? The results of our latest case study may surprise you.

THE RESULTS

Whether AI-generated or human-written text performs better in SERPs.

Publish an AI-generated piece and a human-written piece.

Human piece outperforms the AI piece.

When it comes to artificial intelligence versus humans, we aren’t interested in who wins the apocalypse (although we bet on whichever team Schwarzenegger is on). We’re interested in a topic far more pressing: which party is the better writer? We know we aren’t alone in wondering, either, with OpenAI’s darling ChatGPT taking the world by storm since its public release in late 2022. Many praised the advanced AI model for opening up new opportunities to scale production. Many human content creators, however, worried about being replaced by mere algorithms. We had to know if there was any truth to these fears, so we decided to test whether AI or human text would do better in search engine results pages (SERPs).

The Problem

AI-written text vs human-written text: which is better? To answer that question, we decided we needed to put some pieces out on Google to see which would come out on top.

Here is how that process went.

First, we had to find a topic to write about. Digital Strike – Targeted Marketing has long cared about the well-being of older adults and their families. It’s why we created Caring Advisor, a free-to-use directory that helps seniors and their families find senior living communities that work for them. Caring Advisor is also an invaluable tool for senior living communities themselves: our directory does not sell leads back to communities; it simply gives communities the leads they earned, for free. Needless to say, our years of work in the senior living industry have given us plenty of experience with this subject, so it seemed the perfect topic for this case study: senior living SEO.

We wanted our team to create two long-form pieces that rank for the keyword “senior living SEO.” One piece would be AI-generated, the other human-written. Both pieces would act as guides for senior living communities wanting to implement SEO best practices online. We would release them both into the wild, wild wasteland of Google and see which one would rank (or if either would rank at all), what position the pieces would reach, and how long the ranking process took.

Here are the parameters we set for our study:

- We would not get any direct links from third-party sites.

- We would not place links from website navigation (minus the potential link from a sitemap update).

- We would manually index both pages on Google Search Console.

With these parameters in place, our AI team went to bat first.

The Solution

Our AI-Generated Content Process

URL: https://www.digitalstrike.com/senior-living-seo-guide-guide/

Publication date: 10/05/2023

Number of links in copy: 7

Length: 6289 words

Number of images: 0

Tools used: ChatGPT

FAQ section: Yes

Our SEO team used the generative AI tool ChatGPT (GPT-3.5) to create a senior living SEO piece. Here is the basic process our team followed when feeding prompts into the AI system:

- Our team started with this prompt: “I want you to act as a content writing expert that speaks and writes fluent English. Create long-form content outline in English. The content outline should include a minimum of 20 headings and subheadings. The outline should be extensive and cover the whole topic. Use fully detailed subheadings that engage the reader. The outline should include a list of frequently asked questions relevant to the topic. Please provide a catchy title for the article. Don’t write the article, only the outline for writers. The title of the article is: Senior Living SEO Ultimate Guide.”

- After creating the outline, we sent in the following prompt: “Great now answer and fill out all the words/content so it’s the best article I can use ever, thank you! Please write in English language.” ChatGPT struggled to write such a large piece of content all at once, so the team switched strategies and asked ChatGPT to fill out each section one by one.

- After writing each section, the team sent over prompts asking for catchy headlines and metadata.

- After getting this information for Google Search, the team asked ChatGPT to write about our company (with a few different prompts) so we could include a section about our company’s reliability in the final copy.

Once our SEO team determined that ChatGPT had written as much (and as well) as it could, the project was handed over to our content team for review. The team spent about 1 hour reviewing the piece, which included placing some internal and external links and reorganizing some of the content.

The AI-generated text went live on October 5, 2023, and our team later updated the piece on January 16, 2024, to include a table of contents to improve user experience.

Our Human Writing Process

URL: https://www.digitalstrike.com/industries-senior-living-marketing/

Publication date: 12/13/2023

Number of links in copy: 24

Length: 3431 words

Number of images: 11 (including header image)

Tools used: Clearscope, Canva, TinyPNG

FAQ section: No

Our human writers knew they had their work cut out for them. They used Clearscope to discover the keywords they would need to make their content catch the eyes of Google. Our team then got to work writing the content, drawing on their experience with senior living and highlighting the important practices they had learned. Since that experience resulted in a hefty piece of content, they created a user-friendly table of contents to help human readers jump around the page, and they varied their sentence structure to improve flow.

Besides crafting text, our team also created unique images and graphics to add to the page. They utilized both Canva (to create the images) and TinyPNG (to compress the images in order to boost site speed). All images include both relevant image titles and alt text.

The human-written content went live on December 13, 2023.

The Result

Our AI writing started out strong. Published on October 5, 2023, it immediately started ranking on page 1 of Google for the term “senior living community lead generation.” On October 6, it reached position #5 on Google desktop for “senior living SEO.” On mobile that same day, the piece sat at #1 and appeared as a featured snippet for “senior living SEO.” By October 28, the piece had reached #1 on Google desktop, and it then bounced between #1 and #2 consistently for several weeks.

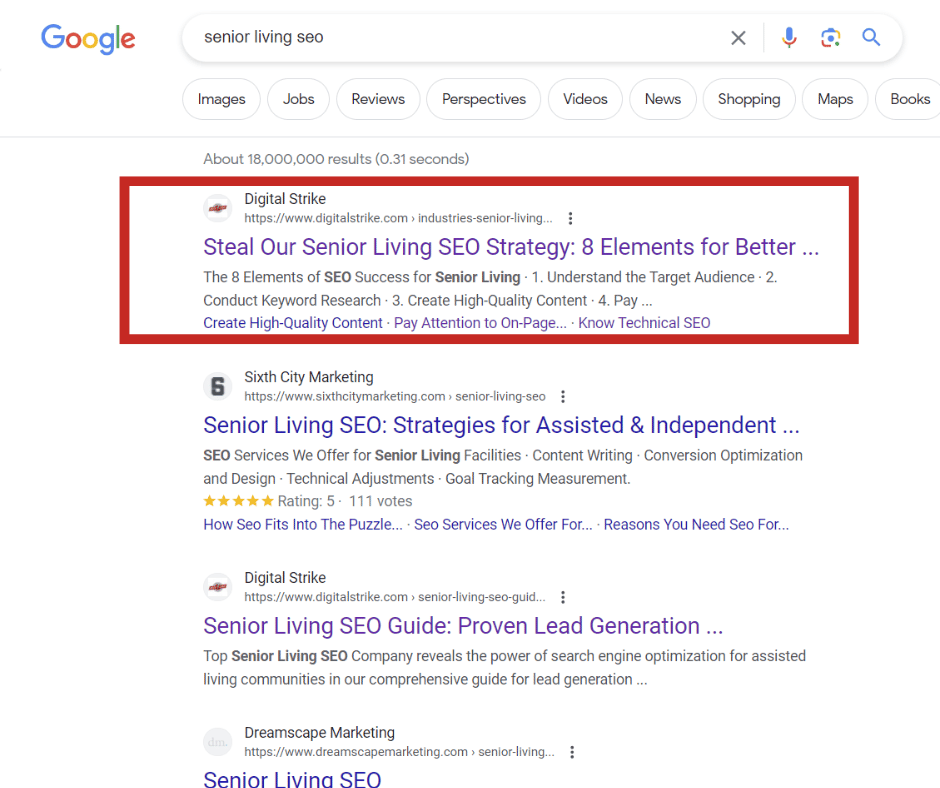

Our human piece went live on December 13, 2023. It started to appear in spots #11 and #12 just 4 days after it was posted. The next day, it hit spot #9, reaching the first page of Google, all within less than a week. By January 9, 2024, our team noticed this piece had jumped to spot #3 on desktop. By January 15, 2024, our team noticed that our human-written piece had unseated our AI piece on desktop, with the human piece reaching position #1 and the AI piece being pushed down to #2.

At the time of writing this case study (02/06/2024), our human piece sits at #1 on both Google desktop and mobile for our target keyword “senior living SEO.” The AI piece sits at #3 on Google desktop and #4 on mobile for the same term.
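For readers who want to check the timeline arithmetic, here is a minimal Python sketch that computes the gaps between the dates reported above. The dates come straight from this case study; the variable names and the `days_between` helper are our own shorthand for illustration, not part of any tool we used.

```python
from datetime import date

# Dates reported in this case study (desktop rankings for "senior living SEO").
AI_PUBLISHED = date(2023, 10, 5)
AI_REACHED_TOP = date(2023, 10, 28)
HUMAN_PUBLISHED = date(2023, 12, 13)
HUMAN_REACHED_TOP = date(2024, 1, 15)


def days_between(start: date, end: date) -> int:
    """Return the number of calendar days from start to end."""
    return (end - start).days


# Hypothetical labels; each value is a simple difference between two dates.
timeline = {
    "ai_days_to_first_place": days_between(AI_PUBLISHED, AI_REACHED_TOP),
    "human_days_to_first_place": days_between(HUMAN_PUBLISHED, HUMAN_REACHED_TOP),
    "ai_head_start_in_days": days_between(AI_PUBLISHED, HUMAN_PUBLISHED),
}

for label, days in timeline.items():
    print(f"{label}: {days}")
```

The gaps only describe when each milestone was observed; they say nothing about why a piece moved, and the AI piece's head start is one of the reasons the two timelines are not directly comparable.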

So, when it comes to AI vs human content, it appears that the human touch really does make a difference. That said, human-like content created by AI technology can also do well on Google, as long as it gets human review and a little rewriting along the way.

Limitations of Our Study

It’s important to note a few key weaknesses of our study. The three largest are the different publication dates of our pieces, their different URL structures, and the limited scope of our study.

- Different publication date. Our AI piece went out two months earlier than our human piece, so it’s hard to factor in just how much time plays a role in rank when comparing the two pieces.

- Different URL structure. Our pieces were not filed under the same location on our site; our AI piece is a page, while our human piece lives on our blog. Whether this URL structure plays a role in rank has yet to be determined; we believe a future test run with these factors controlled for would help us better understand whether they played a major role in our study’s outcomes.

- Limited scope. The single-largest limiting factor in our study? The size! We only compared two pieces of content competing for the same keyword. While we plan to run more tests down the road (and hope you stay tuned for those results!), we know we can’t form any definitive conclusions off this case study alone.

Will Search Penalize AI Content?

AI is popular, but some have worried that using any AI-generated text on their website will mean penalties from Google and other search engines. If you’re one of these folks, put your worries to rest. At least for now, AI is here to stay on Google. The tech giant has previously said that, so long as the content is high quality and does not violate Google’s Terms of Service or spam guidelines, it will not penalize a site for hosting AI-generated content.

How Useful are AI Content Detectors?

Google may not care if a site hosts AI content, but educators, journals, news sites, and others might, especially when concerns about plagiarism are involved. Unfortunately, for shorter pieces, one study shows that humans can struggle to distinguish between human-written and AI-generated content. Human detection does get a little better when the pieces are longer, however, as AI can struggle to write longer-form content accurately.

Since it can be harder for humans to determine what’s made by humans or algorithms, it may be more accurate (and faster) to use AI content detection tools to catch AI-generated text. Some popular AI checkers include:

- Copyleaks – Can detect content written by GPT-4, ChatGPT, Bard, humans, or a combination of human and AI. The free version is available in English, but upgraded features can check content in other languages. Copyleaks is a combination tool: alongside AI detection, it includes a plagiarism checker and their new Grammar Checker API.

- Undetectable.ai – This tool is a little different from others on our list. It not only detects AI text, but also has a tool to “humanize AI text”… in other words, it helps AI text mimic human text better, helping it bypass other AI detection tools.

- GLTR (Giant Language model Test Room) – This tool is the result of collaboration from minds at Harvard NLP and MIT-IBM Watson AI Lab. It was only trained on GPT-2, so it may not be accurate at detecting content created using GPT-3, Bard, or GPT-4.

It’s important to note that while these tools offer great functionality to people like educators, they are not foolproof and have been reported to return false positives.

The Conclusion

Advanced algorithms versus caffeine-addicted writers. The matchup of the century. Here is who came out on top: people! Our human-written content outranked our AI-generated piece in about a month. While our AI piece still sits comfortably on the front page of both Google mobile and desktop, ChatGPT didn’t do all the work; this piece still required plenty of input from human writers, editors, and SEO experts.

Our conclusion? When it comes to AI or human text in this case study, humans reign supreme in terms of rankings. That said, AI certainly has its place in content creation, especially for short or mid-sized pieces when your team is in a time crunch or wants to scale production. AI cannot fully replace humans, however. We prefer to think of AI as a tool in your toolkit rather than the new coworker who’s going to steal your job.

Disclaimer: The AI piece has been updated since the time of this case study’s publication.

