If you’ve spent any amount of time in the digital marketing industry, you know just how crucial search engine optimization (SEO) is for the success of your websites and campaigns.

Despite how important SEO is, we often hear how daunting it can be, even for a senior marketing manager. SEO seems like such an abstract concept because there are so many layers and factors that can affect performance… and those layers and factors are constantly changing. It’s not as simple as managing pay-per-click (PPC) campaigns where you can literally pay for higher placement if you’re not seeing the results as expected. (Okay, not that simple. But you know what we are getting at.)

So, just how do you become familiar with SEO (and maintain your sanity while doing it)? Keep reading to learn more about technical SEO and the best technical SEO audit tips you can implement right away.

What is Technical SEO?

One of the ways we like to introduce business owners to SEO? Presenting it as being composed of three equally important pillars that will empower growth. These three pillars are:

Many people are familiar with on-site SEO, or on-page SEO. On-page SEO includes content optimization, such as using target keywords in your copy, updating meta tags to include buzzwords, and using an SEO-friendly URL structure. While absolutely critical for success in its own right, you need to complement this type of SEO with technical SEO.

Technical SEO includes any task that is completed within the framework of your actual website. It therefore includes concerns like website crawlability, page load speeds, whether you have duplicate pages, and much more.

Performing a technical audit on your site can reveal areas for improvement, and may be one of the best ways to improve your website for both human users and search platform-ranking algorithms.

Fortunately, it is easy enough to perform a technical SEO audit and boost your site’s ranking on search platforms like Google.

Step-by-Step Guide for In-Depth Technical SEO Audits

The truth is, there is no single best way to optimize a website, only the best way for your website’s unique needs while keeping in mind the tools and resources you have at your disposal. Taking these variables into account, you can craft an auditing strategy that elevates your website in Google’s eyes (and rankings).

Whether your website operates as an affiliate marketing opportunity or an ecommerce sales driver, technical SEO tactics can make or break your visibility online. Our SEO strategists rely on the following quick but effective tactics to outrank the competition and help grow our clients’ businesses.

1. Run a Website Crawl

Crawling isn’t just for spiders. It’s also for robots on the web. These robots serve an important function: reviewing your website and storing (aka indexing or caching) it in a search platform’s database. This way, when a user enters a query into a search platform, the platform’s algorithm can quickly draw an organized list of the most relevant content from its database.

The downside to caching and indexing, however, is that sometimes search platforms will store outdated versions of your website, meaning that users won’t find the latest and greatest content on your site.

That is why it is imperative to have crawlers (also referred to as robots or bots) frequently review every nook and cranny of your website, even if your website doesn’t have many pages. That way, you can rest assured that Google and other platforms see only the latest version of your site, and you can quickly detect and fix any errors that prevent your site from being indexed and appearing to search users. In other words, frequent crawling can help you avoid a drop in traffic or visibility due to technical issues and crawl errors.

Review how crawlers like Googlebot or Bingbot handle your site to find errors such as dead pages (404 errors), broken links (links that no longer work), pages that are not being indexed, signs of hacking, server accessibility issues, downtime, and more. Broken links, image errors, and page removals happen all the time; that’s why we crawl and review the website every week, so we can keep everything running as smoothly as possible.
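To make the idea concrete, here is a minimal Python sketch of one thing a crawl checks for: links that lead to error pages. It is an illustration only, not how Googlebot or any particular tool works. The `get_status` function and the example.com URLs are placeholders we made up so the example runs without touching the network.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


def find_broken_links(page_url, html, get_status):
    """Return (url, status) pairs for links whose status is 400 or higher.

    get_status is a caller-supplied function (hypothetical here) that
    takes a URL and returns its HTTP status code.
    """
    collector = LinkCollector()
    collector.feed(html)
    broken = []
    for href in collector.links:
        absolute = urljoin(page_url, href)  # resolve relative links
        status = get_status(absolute)
        if status >= 400:
            broken.append((absolute, status))
    return broken


# Canned statuses stand in for real HTTP requests.
sample_html = '<a href="/services">Services</a> <a href="/old-page">Old</a>'
statuses = {
    "https://example.com/services": 200,
    "https://example.com/old-page": 404,
}
broken_links = find_broken_links("https://example.com/", sample_html, statuses.get)
print(broken_links)
```

In a real audit, `get_status` would make an actual HTTP request, and a dedicated crawling tool would handle details this sketch ignores, such as redirects, rate limits, and images.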

Tools that we at Digital Strike like for this tactic include:

2. Make the Most Out of Your Crawl Budget

Crawler bots are busy. There are nearly 2 billion websites online right now. These robots are expected to crawl every URL on the internet, which means they don’t want to waste their time with unnecessary crawls of useless pages. That is why most search platforms enact a crawl budget, or a limit on how many pages on your site their crawlers will review every day.

To make the most of your crawl budget, try the following five easy actions:

- Make your important pages indexable (pages are indexable by default, so check that none of them carry a stray noindex directive in the robots meta tag).

- De-index PPC landing pages.

- Keep your number of pages to a minimum by reorganizing similar content into what we refer to as “power pages,” i.e., long-form, authoritative, comprehensive, and informational content.

- Use noindex tags on pages that are not as important, so Google bots don’t use up your crawl budget on content that will never get to the first page of Google (e.g., About Us pages).

- If you have pages that shouldn’t even be found via organic search (e.g., admin pages) or that you otherwise do not want crawled, use Disallow rules in your robots.txt file.
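If you want to double-check which pages your robots.txt rules actually block, Python’s built-in `urllib.robotparser` can read the rules the same general way a well-behaved crawler would. The sketch below is a rough illustration: the robots.txt contents, the `/lp/` folder for PPC landing pages, and the example.com domain are all made-up assumptions.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep crawlers out of admin pages and PPC landing pages.
robots_txt = """\
User-agent: *
Disallow: /admin/
Disallow: /lp/
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())


def crawlable(path, user_agent="*"):
    """True if the given path may be crawled under the rules above."""
    return parser.can_fetch(user_agent, "https://example.com" + path)


for path in ["/blog/technical-seo", "/admin/login", "/lp/spring-sale"]:
    print(path, "->", "crawlable" if crawlable(path) else "blocked")
```

One caveat worth keeping in mind: a crawler can only see a noindex directive on a page it is allowed to crawl, so blocking a page in robots.txt and adding noindex to that same page work against each other.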

3. Index the Correct Version of Your Website

On occasion, website updates mean that servers begin serving the wrong version of a website to users and search platforms, such as serving the site over HTTP instead of HTTPS. It is therefore important to manually verify that the correct version of your website is being indexed on your chosen search platform, or you could run into issues.

If both the HTTP and HTTPS versions are indexed, for instance, the two URLs could be treated as duplicate content. Search engines dislike duplicate content and may filter it out of results or rank it lower, so you want to avoid these issues as much as possible. Duplicate content issues aren’t as common these days, as Google’s crawlers get smarter and better understand the purpose of different content. That said, these errors could still occur if you do not run a manual site audit and review similar content on occasion.
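If you have a list of the URLs a search platform has indexed for your site, a few lines of Python can flag pages that show up under more than one version. This is a minimal sketch under our own assumptions: it treats URLs as the same page when they differ only by http/https, a leading "www.", or a trailing slash, and it ignores query strings. The example.com URLs are placeholders.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def group_url_variants(urls):
    """Group URLs that differ only by scheme, a leading "www.", or a trailing slash."""
    groups = defaultdict(list)
    for url in urls:
        parts = urlsplit(url)
        host = parts.netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        path = parts.path.rstrip("/") or "/"
        groups[(host, path)].append(url)
    # Keep only pages that are reachable under more than one URL.
    return {key: found for key, found in groups.items() if len(found) > 1}


indexed = [
    "http://example.com/pricing",
    "https://example.com/pricing",
    "https://www.example.com/pricing/",
    "https://example.com/blog",
]
duplicates = group_url_variants(indexed)
for (host, path), variants in duplicates.items():
    print(host + path, "is indexed as:", variants)
```

Any page that appears in the output is a candidate for a redirect or a canonical tag pointing to the one version you want indexed.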

The following URL inspection and testing tools can help you check the index status of your site:

4. Optimize Your Sitemap(s)

The devil is in the details, especially when it comes to websites. Your sitemap is essentially the blueprint of your entire online operation, telling search platform algorithms how they should interact with your site (and how they should present it to users). Even a small error on your sitemap can significantly impact site performance, meaning that sitemap optimization and cleanup is incredibly important for ranking high in SERPs.

There are two types of sitemaps, XML and HTML. Your XML sitemap is for crawlers while your HTML is for human users. You can have one or both types for a single site.

To optimize your XML sitemap, consider the following:

- Exclude unimportant (noindexed) pages from the sitemap, which also helps you get the most out of your crawl budget. (A win-win!) Then, include your XML sitemap in your robots.txt file (the file that tells crawlers what pages they should or should not crawl).

- Use plugins that automatically generate XML sitemaps for you when using website-building platforms like WordPress.

- Ensure the URLs in your sitemap are the canonical versions of each page, so you avoid confusing the bots with complicated redirect chains (and make sure these canonical URLs do not point to a page that is redirected).
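If you are curious what a sitemap plugin does behind the scenes, the XML tips above can be sketched in a few lines of Python. Treat this as a simplified illustration: the page list, the `noindex` flag, and the example.com URLs are hypothetical, and a real sitemap generator handles far more (last-modified dates, multiple sitemap files, and so on).

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Build sitemap XML from a list of {'url': ..., 'noindex': ...} dicts.

    The dict shape is an assumption made for this example.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if page["noindex"]:
            continue  # leave noindexed pages out of the sitemap
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["url"]
    return ET.tostring(urlset, encoding="unicode")


pages = [
    {"url": "https://example.com/", "noindex": False},
    {"url": "https://example.com/services", "noindex": False},
    {"url": "https://example.com/about", "noindex": True},
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)

# The line to add to robots.txt so crawlers can find the sitemap.
robots_line = "Sitemap: https://example.com/sitemap.xml"
print(robots_line)
```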

To optimize your HTML sitemap, try the following:

- Update your HTML sitemap when needed, since it cannot automatically update the way XML sitemaps can.

- Keep the number of links on your HTML sitemap to fewer than 100.

- Add keywords in your meta descriptions, meta titles, and anchor texts (we’ll discuss this in further detail below).

5. Update Page Title, Meta Descriptions, and H1 Tags

When the opportunity arises, improve click-through rates by optimizing headers, title tags, and meta descriptions, and reinforce keyword relevance with well-chosen H1 tags. In other words, find a target keyword and closely related terms and use them not just throughout the body copy of a page, but also within the proper heading tags and meta descriptions. Making these changes might seem tedious, but trust us: the effort is well worth the results you are likely to see.

Keep in mind that meta descriptions are not a ranking factor for Google; they are there to entice human readers to read your content or buy your product. By testing different wording in your metadata, however, you can find the mix of persuasive copy that increases organic search traffic and reduces bounce rates.
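Checking these tags by hand gets old quickly, so here is a rough Python sketch of how such a check could be automated for a single page. It is one possible approach, not a standard tool: the `audit_page` function, the issues it reports, and the sample HTML are all our own inventions for illustration.

```python
from html.parser import HTMLParser


class HeadAudit(HTMLParser):
    """Pulls the title, meta description, and H1 text out of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = None
        self.h1_texts = []
        self._current = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag in ("title", "h1"):
            self._current = tag
            if tag == "h1":
                self.h1_texts.append("")
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content", "")

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        if self._current == "title":
            self.title += data
        elif self._current == "h1":
            self.h1_texts[-1] += data


def audit_page(html, keyword):
    """Return a list of plain-language issues found on the page."""
    parser = HeadAudit()
    parser.feed(html)
    keyword = keyword.lower()
    issues = []
    if keyword not in parser.title.lower():
        issues.append("title is missing the target keyword")
    if not parser.meta_description:
        issues.append("meta description is missing")
    if not parser.h1_texts:
        issues.append("H1 is missing")
    elif not any(keyword in text.lower() for text in parser.h1_texts):
        issues.append("H1 is missing the target keyword")
    return issues


sample = """
<html><head><title>Technical SEO Audit Checklist</title></head>
<body><h1>Your Technical SEO Audit, Step by Step</h1></body></html>
"""
issues = audit_page(sample, "technical seo audit")
print(issues)
```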

6. Track Keyword Rankings, Impressions, and Queries

Analyze trends in rankings, impressions, and queries via Google Search Console to identify optimization opportunities and indexation issues. If you are tracking your keywords and noting any substantial changes, then you can tell if/when an indexation issue arises.

Did a keyword that you typically rank highly for drop off within the search engine results pages (SERPs)? Chances are you have an indexation issue or you could potentially be a victim of Google’s algorithm updates.
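If you export keyword positions on a regular schedule, spotting these drops can be automated. The sketch below assumes you already have two snapshots of average positions as simple keyword-to-position mappings; the data, the function name, and the five-position threshold are all arbitrary choices for illustration, not recommendations from Google.

```python
def find_ranking_drops(previous, current, min_drop=5):
    """Return keywords whose position worsened by at least min_drop places.

    previous and current map keyword -> average position (1 is the top result).
    A keyword missing from current is reported with a new position of None.
    """
    drops = []
    for keyword, old_position in previous.items():
        new_position = current.get(keyword)
        if new_position is None or new_position - old_position >= min_drop:
            drops.append((keyword, old_position, new_position))
    return drops


# Made-up snapshots from two reporting periods.
last_month = {"technical seo audit": 3, "crawl budget": 8, "xml sitemap": 12}
this_month = {"technical seo audit": 4, "crawl budget": 21}

drops = find_ranking_drops(last_month, this_month)
for keyword, old, new in drops:
    print(keyword, "moved from", old, "to", new if new is not None else "unranked")
```

A keyword that disappears entirely is often the stronger hint of an indexation issue, which is why the sketch reports it separately from an ordinary slide in position.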

You cannot judge an SEO strategy properly without tracking organic search performance. There are several keyword ranking and audit tools available for this type of tracking. At Digital Strike - Targeted Marketing, we like the following:

(FYI: These are great tools for keyword research as well.)

7. Evaluate Your Organic Search Traffic Trends

Throughout the month, you should evaluate traffic trends in Google Analytics to analyze overall search engine performance from a traffic volume standpoint. If there are specific web pages that are seeing an increase in traffic (specifically from organic search), it should go without saying that you’re doing something right. (Good job!)

But what if you’re seeing the opposite? (It’s okay! Take a deep breath.)

If you’re not seeing the traffic volume you expected, check for technical SEO issues on your important pages first. If you’re not the webmaster, your company should consult a digital marketing agency that specializes in SEO.

8. Sculpt Authority with Links

Most digital marketers know the importance of external links/backlinks. But they aren’t the only types of links that matter when it comes to SEO.

Ensure web crawlers know what page is the authority on a given topic and improve authority through internal linking opportunities. Many SEO agencies fail with link-building campaigns by not considering the importance of internal links and proper anchor text usage.

If you want a particular page to rank highly in the search engine rankings, you need to mold its authority by strategically placing internal links that point to it from other highly authoritative pages on your website that cover similar topics. Boosting your homepage’s authority is a great first step for increasing organic traffic, for example. Do so by having different pages on your website point to your homepage… and have relevant links (with keyword-rich anchor text) to those pages as well. This practice helps you create a healthy ecosystem of interlinking on your website that shows search platforms your site is robust and should therefore rank higher in SERPs.
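One simple way to see where your internal links currently point is to count how many other pages link to each page. Here is a small Python sketch of that idea. The site map below is invented, and counting inbound links is only a crude stand-in for how search platforms actually measure authority.

```python
from collections import Counter


def inbound_link_counts(link_graph):
    """Count how many distinct pages link to each page.

    link_graph maps each page to the list of internal pages it links to.
    """
    counts = Counter({page: 0 for page in link_graph})
    for source, targets in link_graph.items():
        for target in set(targets):
            if target != source:  # ignore self-links
                counts[target] += 1
    return counts


# Hypothetical site structure.
site = {
    "/": ["/services", "/blog"],
    "/services": ["/", "/contact"],
    "/blog": ["/", "/services"],
    "/contact": ["/"],
    "/case-study": ["/"],
}
counts = inbound_link_counts(site)
orphans = sorted(page for page, n in counts.items() if n == 0)
print(counts.most_common())
print("Pages with no internal links pointing to them:", orphans)
```

Pages with few or no inbound internal links are good candidates for new links from your stronger pages.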

9. Use Social Media

Don’t roll your eyes at us.

Social media can help your business thrive.

Yes, really.

For starters, using social media to push your content helps increase organic traffic, builds brand awareness, and creates backlinks to your website (both through the link you share in your original post and any shares you get from followers). Additionally, platforms like Google even crawl certain social media sites like Facebook, meaning that a well-optimized and popular Facebook post could show up in Google SERPs.

So don’t let your social media marketing strategy fall by the wayside or think of it as a completely separate enterprise from SEO.

10. Prioritize the Mobile Version of Your Site Over the Desktop Version

More and more people are forgoing desktops, and the majority of searches now happen on mobile devices. That is why Google uses mobile-first indexing (that is, Google prioritizes the mobile version of your site for search and indexing purposes).

One quick way to see if your site is friendly for mobile users? Use Google’s Mobile-Friendly Test tool.

11. Run Site Speed/Page Load Time Tests

It should come as no surprise that Google and other search platforms consider website speed to be an important SERP ranking factor.

A slow page load time typically signals a poor user experience, and search platforms are all about serving the best user experience (more on that below). How slow is too slow? More than three seconds, according to Google research.

That means you need to keep your page load times at or below three seconds to reduce the likelihood of high bounce rates. You should evaluate load times both independently and against the competition. This approach helps you better determine if improving site speed should be on your priority list.
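Once you have load times in hand, the comparison itself is simple arithmetic. The sketch below assumes you have already measured load times in seconds for your own pages and for a few competitor pages; the numbers are invented, and using the competitors’ median as the benchmark is just one reasonable choice.

```python
from statistics import median

THRESHOLD_SECONDS = 3.0  # the three-second guideline mentioned above


def speed_report(own_times, competitor_times):
    """Flag pages over the threshold and pages slower than the competitor median."""
    benchmark = median(competitor_times)
    report = {}
    for url, seconds in own_times.items():
        report[url] = {
            "too_slow": seconds > THRESHOLD_SECONDS,
            "slower_than_competitors": seconds > benchmark,
        }
    return report


# Made-up measurements, in seconds.
own = {"/": 2.1, "/pricing": 3.8, "/blog": 2.9}
competitors = [2.4, 2.6, 3.1]

report = speed_report(own, competitors)
for url, flags in report.items():
    print(url, flags)
```

A page like `/blog` in this example passes the three-second check but still trails the competition, which is exactly the kind of case that helps you decide how high site speed belongs on your list.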

There are several tools that both test site speed and provide a list of improvements. We prefer to use Google-produced tools, such as Google PageSpeed Insights.

12. Optimize User Experience

Aside from website page speed, there are several other factors that search engines note as ranking factors, such as mobile-friendliness, usability, accessibility, and efficiency.

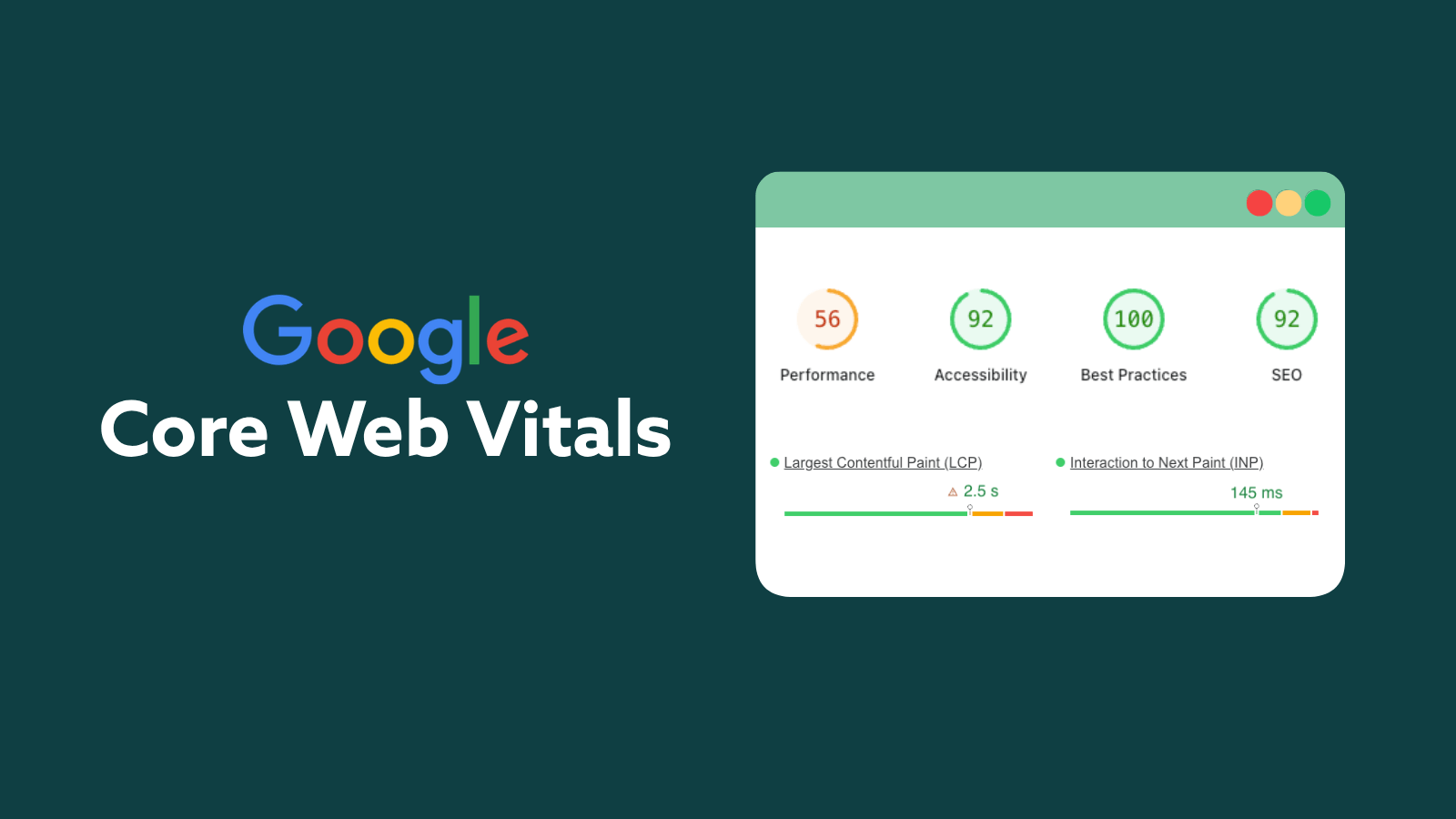

Simply put, search platforms reward you when you keep things simple. Good news: most platforms give you the tools to help you do just that. Google, for instance, provides several tools to increase your scoring on the Core Web Vitals report, which will tell you if your website provides a good or poor user experience.

The better the user experience, the higher your website will rank on the SERPs.

Let’s talk about Core Web Vitals a little more.

In May 2020, Google announced Core Web Vitals as a new way to judge the user-friendliness of a website. This update officially rolled out in 2021, establishing metrics that affect visibility in the SERPs.

The Core Web Vitals report ranks your website’s status as Good, Needs Improvement, or Poor for the following metrics:

- Largest Contentful Paint (LCP) – The time it takes for the largest content to show up in full view on screen, starting from when the user clicks the link or types in the URL. This content is typically a hero image or video on the homepage. Google recommends your pages’ largest content show up in less than 2.5 seconds to be in good standing.

- First Input Delay (FID) – The time between a user’s first interaction with your page (such as clicking a link or tapping a button) and the moment the browser is able to begin responding to that interaction. For example, if a user clicks a menu button while the page is still busy loading scripts, FID is how long that click waits before the browser starts handling it. Google recommends an FID of less than 100 milliseconds.

- Cumulative Layout Shift (CLS) – The amount that visible content unexpectedly moves around while a page loads and while a user interacts with it. Think of this metric as measuring how much the page jumps, for example when a late-loading image or ad pushes the text down just as a user is about to click. If a page shifts its layout while a user is trying to interact with it, it is considered to be a poor user experience. Google scores these shifts starting from 0 (no shifting), with higher numbers meaning more shifting. Google recommends a score of less than 0.1.

On March 12, 2024, Interaction to Next Paint (INP) replaced First Input Delay (FID) as a Core Web Vitals metric. INP, according to Google, is more comprehensive, and takes into account how much time it takes for a site to process and display a response to all user interactions, not just the first.
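To show how the Good, Needs Improvement, and Poor ratings work in practice, here is a short Python sketch that sorts a measurement into one of the three buckets. The "Good" cutoffs for LCP and CLS match the recommendations above; the remaining cutoffs (including those for INP) are Google’s published thresholds as we understand them, so verify them against Google’s current documentation before relying on them. The `rate` function itself is our own illustration.

```python
# (upper bound for "Good", upper bound for "Needs Improvement") per metric.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless score
}


def rate(metric, value):
    """Classify a measurement as Good, Needs Improvement, or Poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "Good"
    if value <= needs_improvement:
        return "Needs Improvement"
    return "Poor"


# Made-up measurements for a single page.
measurements = {"LCP": 2.1, "INP": 350, "CLS": 0.3}
ratings = {metric: rate(metric, value) for metric, value in measurements.items()}
print(ratings)
```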

How to Prioritize Technical SEO Audit Action Items

Every technical audit will look different. Expertly craft yours based on the following three factors.

1. Budget

Technical SEO requires several tools and a lot of patience to comb through every page and every line of code on your website. And, to be honest, it’s complicated.

Using online SEO tools and software, or hiring real people to handle larger projects, is sometimes the best way to fully optimize your website. But these resources aren’t always cheap. You will have to pay not only for the tools and time but also for the knowledge and years of experience that any hired SEO strategist draws on to quickly assess your website or troubleshoot issues.

2. Age of Website

Older websites typically have more authority in the eyes of Google than newer websites do. And more authority means a better chance to rank higher in SERPs. Technical SEO changes also usually show results much more quickly on a well-established site than a newer one. So, if your website can easily be optimized, then optimize what you already have.

Unfortunately, not every website is salvageable. In these cases, starting over from the ground up and making a new site with a stronger foundation is the solution your brand needs.

We frequently hear new clients complain about our initial suggestion: “time for a new website.” We promise we’re not just saying that to sell you a new website. That’s slimy, and that’s not Digital Strike.

The fact of the matter is that websites these days are different from those of the past. Technology has changed, making the launch of a brand-new website quicker and easier than ever. You’d be surprised how starting over can improve your current website’s performance!

3. Time

Time is perhaps the single most important resource you have to consider during an audit. More time means you can comb through the site for nitpicky details. Less time means you have to focus on only the big-ticket items like page speed and redirects.